Getting a streaming service live is a clear milestone. Understanding why streaming services stall after launch is a different challenge, and one that many platforms are not well set up to support.

By Leyra Team

Most streaming services don't fail at launch. They plateau shortly after it.

In the months that follow launch, attention shifts from delivery to performance. Subscriber numbers begin to build, and the focus turns to how the service is actually behaving. The priority becomes continued growth, which depends on iteration. This is often the point where momentum starts to slow and platforms begin to show their limits.

According to industry data, average monthly churn in the US across major streaming services has risen from around 2% in 2019 to 5.5% by early 2025. That shift is not only about audiences becoming harder to please. It reflects a market where switching is easy, catalogues compete for the same attention, and retention depends on how consistently a service improves once it is live, not just how well it launched.
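To put those churn figures in perspective, the short Python sketch below compounds a monthly churn rate over a year. It assumes a constant monthly rate applied to a single starting cohort with no win-backs, which is a simplification rather than a description of how any real service behaves. The function name `retained_after` is illustrative.

```python
def retained_after(monthly_churn: float, months: int = 12) -> float:
    """Fraction of a starting cohort still subscribed after `months`.

    Assumes the same churn rate every month and no returning subscribers.
    """
    if not 0.0 <= monthly_churn <= 1.0:
        raise ValueError("monthly_churn must be between 0 and 1")
    return (1.0 - monthly_churn) ** months


for rate in (0.02, 0.055):
    print(f"{rate:.1%} monthly churn -> {retained_after(rate):.1%} retained after 12 months")
```

Under those assumptions, about 78% of a cohort is still subscribed after a year at 2% monthly churn, compared with about 51% at 5.5%.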

Why streaming services stall after launch

The drivers of growth in streaming are more interconnected than they first appear. Content discovery is a good example. Conviva's 2025 State of Digital Experience report found that 93% of viewers who have a positive experience with a streaming service return within seven days. The reverse matters too. A poor discovery experience can end a session and reduce the chance of a viewer returning.

Improving discovery requires teams to measure where users stall, test changes to layout, carousels, and recommendation logic, and do so on a rolling basis rather than in periodic updates.

The same logic applies across the user lifecycle. Decisions made during onboarding can influence retention weeks later. Pricing changes may affect some customers in ways that are not immediately obvious. And a cohort that looks healthy on total viewing time may be concentrating that time on a single title, which becomes a churn risk once that title is exhausted.
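The single-title risk can be made concrete with a simple concentration measure. The Python sketch below takes a hypothetical mapping of title to total minutes watched by one cohort and returns the top title with its share of viewing time. The data shape, the names, and any threshold for what counts as too concentrated are assumptions for illustration.

```python
def top_title_share(minutes_by_title: dict[str, float]) -> tuple[str, float]:
    """Return the most-watched title and its share of the cohort's total minutes."""
    total = sum(minutes_by_title.values())
    if total <= 0:
        raise ValueError("cohort has no recorded viewing time")
    title = max(minutes_by_title, key=minutes_by_title.get)
    return title, minutes_by_title[title] / total


# Hypothetical cohort: healthy total viewing time, but mostly on one series.
cohort = {"Series A": 8_000, "Film B": 1_200, "Series C": 800}
title, share = top_title_share(cohort)
print(f"{title} accounts for {share:.0%} of viewing time")
```

A cohort like this one looks healthy on total minutes, yet most of that time depends on a single series, which is the pattern worth flagging before the title runs out.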

Consistent growth comes from making smaller, better decisions more frequently across all of these areas, and course-correcting when something does not land as expected. That requires a clear view of user behaviour across the full lifecycle, alongside the ability to test and adjust without significant overhead each time.

Why knowing what to fix isn’t the hard part

Teams working on post-launch growth tend to have a clear picture of what needs improving. First-session drop-off is higher than it should be. A segment of users watches once and never returns. Churn spikes at a predictable point in the subscription cycle, but the signal arrives too late to respond effectively. Most teams already know where the problems are. The difficulty is acting on them quickly enough to make a difference.

Data often sits across systems that were not built to work together. A change to recommendation logic requires engineering time that was not planned for. A pricing test that should take a week ends up taking a month to coordinate. By the time results come back, the window to respond has often closed.

For services that do manage to close that gap, the impact is usually visible quite quickly. Campaigns tied to specific moments, targeted offers, or win-back initiatives can drive clear spikes in engagement and subscriber growth when they are executed and adjusted in real time.

Splatter, a horror-focused streaming service, takes this approach around key moments like Halloween. By running targeted promotions and re-engagement campaigns, and tracking their performance as they go, the team is able to refine what works while the campaign is still active. What makes this effective is the ability to act on performance data without delay.

Without that ability, growth starts to stall in practice. More often than not, the problem is not a lack of insight but the friction involved in making changes consistently.

When the platform starts to slow you down

Before launch, platform conversations often focus on feature coverage. After launch, the more important question is how much effort it takes to use those capabilities on an ongoing basis.

Some of the questions worth asking directly:

- Can changes to homepage layouts or content carousels be made without raising a ticket?

- Can you identify which subscriber cohort is most at risk this month and target them with a specific retention offer?

- Can pricing or packaging be adjusted in response to user behaviour without a full release cycle?

- Is behavioural data accessible in a single view, or does building that picture require pulling from multiple systems?

These are not advanced requirements. For a service trying to improve consistently, they are the baseline for maintaining momentum. When the answers involve more time and coordination than the team can absorb, the platform begins to limit what is possible, regardless of how well it supported the initial launch.

How growing streaming services actually operate day to day

Services that continue to grow after launch tend to share certain operational characteristics. They close the loop between data and action, and treat improvements as part of normal operations rather than separate projects. Teams have enough visibility into subscriber behaviour to recognise patterns early, rather than reacting once churn has already happened.

That timing matters. A service reviewing performance quarterly is always working with a delay. By the time a trend is confirmed and a response is agreed, the users in question have often already made a decision.

The services that sustain growth tend to reduce that lag. Iteration becomes part of how they operate week to week, rather than something that happens occasionally.

What to look for in an OTT platform

For operators assessing their setup, or choosing a platform for the first time, the key question is how well the platform supports ongoing change after launch. That includes how easily teams can access and act on data, how quickly they can implement and test updates, and how much effort is involved in adapting the service as requirements evolve. Shortfalls in these areas are often why streaming services stall after launch.

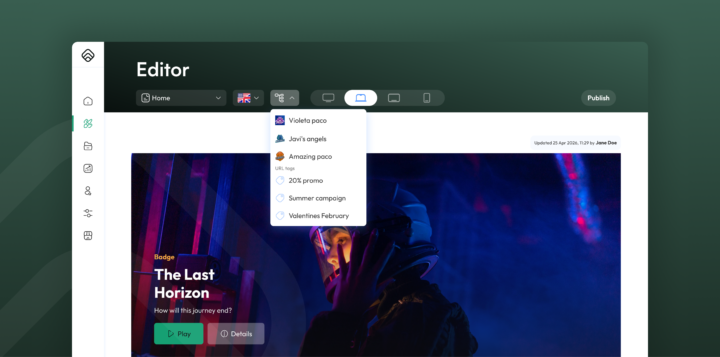

We built Leyra around this challenge. It reduces the distance between insight and action, so teams can test changes, respond to user behaviour, and adjust the service without long development cycles or coordination overhead.

At the core, Leyra brings together the key parts of running a streaming service, from apps and content management through to monetisation and subscriber operations, in a way that works as a connected system rather than a set of separate tools. That makes it easier to see what is happening and act on it without stitching workflows together across multiple platforms.

At the same time, we designed it to stay flexible. Through our marketplace, teams can introduce new tools, partners, or capabilities as their needs evolve, without having to rebuild around each change.

In practice, that might mean refining how content is surfaced during a campaign, testing a new offer for a specific segment, or responding more quickly when engagement starts to drop. What counts is being able to make those changes while they can still influence the outcome. The aim is to keep teams focused on improving the service itself, instead of working around the platform to do it.

Ultimately, growth after launch is an ongoing process. The platform underneath a service either supports that process or slows it down, and over time that difference has a noticeable impact on what a service is able to achieve.

If you would like to talk about growth after launch, get in touch with Leyra or book a demo to see how we support streaming services in practice. You can also follow us on LinkedIn for the latest updates, articles, and company news.